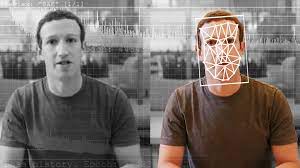

‘Two aspects are involved in our averse reaction to deepfakes: the uncanny feeling of witnessing something abnormal, and the unsettling feeling of being deceived by one’s own eyes.

This first aspect concerns the uneasiness we feel in noticing that something is not ‘quite right’. There is something creepy about deepfakes that represent people in ways that are slightly ‘off’. Early deepfake videos contained unnatural eye-blinking patterns, for example, which viewers would not consciously notice but which nonetheless signalled that something strange was going on. As deepfake technology improves and footage looks and sounds more like authentic material, this aspect of eeriness disappears.

But another emerges: can we really trust what we’re watching?

This is the other side of the uneasy feeling that deepfakes arouse, which concerns footage that is too realistic-looking. Here deepfakes cause a sense of uneasiness because they make us distrust what we see with our own eyes. While trust in the reliability of video and images has already been undermined with the ascent of Photoshop and other forms of manipulation, the potential of deepfake technology to continuously improve the convincingness of inauthentic recordings through machine learning deepens the concern over deception.

Not only can deepfake technology realistically represent people’s image and voice, it also allows for impersonation in real time. We can’t assume that the person we see or hear in digital footage is who we assume them to be, even if we seem to be interacting with them….’

— via Psyche

Related: The Biggest Deepfake Abuse Site Is Growing in Disturbing Ways

‘A deepfake website that generates “nude” images of women using artificial intelligence is spreading its murky tentacles across the web—spawning look-alike services through partner agreements and recruiting new users through a referral system. The expansion efforts have allowed the service to proliferate despite bans placed on its payment infrastructure. The website, which WIRED is not naming to limit its amplification, has existed since last year. It digitally “removes” clothing from non-nude photos to create nonconsensual pornographic deepfakes. Researchers say its output is “hyper-realistic,” and unlike similar abusive platforms, it can generate pornographic images even when the person in the original photo is fully clothed. Previously, similar technologies have only worked with partially clothed photographs…’

— via WIRED